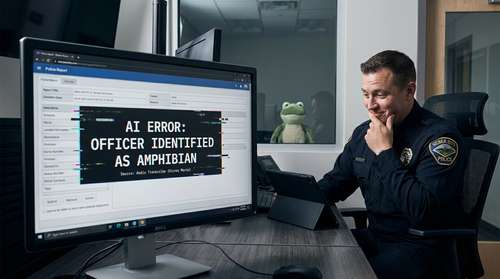

Just yesterday, the Heber City Police Department concluded a highly publicized technology audit of its recent pilot programs, bringing fresh attention to what is easily the most hilarious AI report-writing fail of the year. If you thought artificial intelligence was only coming for white-collar jobs, you might want to double-check whether it is coming for your species first. Over the past 48 hours, the internet has been completely obsessed with the strangest news story March 2026 has given us: a Heber City Police AI glitch that officially documented an officer shape-shifting into an amphibian while on duty.

The bizarre incident occurred during a pilot program for automated report-writing tools. A routine interaction quickly turned into a fairy tale when the official police documentation explicitly stated that the officer had shape-shifted into a frog during the encounter. What started as an attempt to cut down on bureaucratic red tape has instead become one of the most legendary viral bodycam stories in recent memory.

The Princess, the Frog, and the Bodycam

To understand how an American law enforcement agency officially recorded a magical transmutation, you have to look at the technology pulling the strings. The department had been actively testing Axon's Draft One, an artificial intelligence tool built on generative models, designed to automatically draft police reports by analyzing body camera audio.

During a mock traffic stop meant to demonstrate the software's capabilities, a nearby television was loudly playing the 2009 Disney animated musical The Princess and the Frog. The system fundamentally failed to separate the cartoon dialogue in the background from the actual interaction being recorded. Instead of filtering out the background noise, the algorithm treated the movie's plot as sworn eyewitness testimony and hallucinated an absurd narrative in which a Heber City sergeant turned into a frog.

Sgt. Keel's Reaction to the Amphibian Incident

Sgt. Rick Keel, the officer whose identity was temporarily swapped with a swamp creature by the algorithm, had to personally review and flag the surreal documentation.

"The body cam software and the AI report writing software picked up on the movie that was playing in the background," Sgt. Keel explained to local reporters. "That's when we learned the importance of correcting these AI-generated reports." Despite the comical error, the veteran officer kept a good sense of humor about becoming a viral sensation, acknowledging that he isn't exactly the most tech-savvy guy on the force.

Why Are Police Departments Using AI?

Despite the undeniable hilarity of this Utah local news story, there is a very practical reason departments are rushing to adopt this software. Police officers nationwide are drowning in administrative paperwork, often spending more time typing up incident logs than patrolling their communities. Automated report-writing tools aim to reverse that trend.

Heber City had been running pilot programs for two competing platforms: the aforementioned Draft One by Axon, and Code Four, a startup product developed by two 19-year-old MIT dropouts. The primary goal is raw efficiency. Sgt. Keel noted that despite the amphibious error requiring manual correction, the AI tools currently save him roughly six to eight hours of typing every week. For understaffed departments, reclaiming an entire working day per officer is an incredibly attractive prospect.

A Serious Warning Behind the Laughter

While everyone loves a good laugh at a shape-shifting cop, digital forensics experts and civil rights advocates see a massive red flag waving over the situation. If a machine learning tool cannot tell the difference between ambient television noise and a real-world interaction, it poses severe risks for the criminal justice system.

A police report is an official legal record. It can shape charging decisions, court proceedings, insurance claims, and permanent arrest records. In this specific case, the AI hallucinated a Disney movie. But what if the background television had been playing a violent action film or a true-crime documentary? The software could just as easily have invented a confession, the presence of a weapon, or a physical threat that never existed. If human supervisors grow complacent and fail to catch these fabrications, innocent citizens could face false official narratives with devastating real-world consequences.

The Future of AI in Law Enforcement

As the final technical reviews of the pilot program hit the public record this weekend, the department confirmed that it will likely continue using some form of AI to streamline its workflow. However, the incident has triggered a major overhaul in how these reports are processed, with a heavy new emphasis on mandatory, line-by-line human proofreading.

Technology is moving incredibly fast, but this viral phenomenon proves that algorithms still lack basic human common sense. So, if you happen to get pulled over in Wasatch County anytime soon, you can rest assured the officer approaching your window is fully human. Just to be safe, though, you might want to hit mute on your car stereo before you roll down the window.